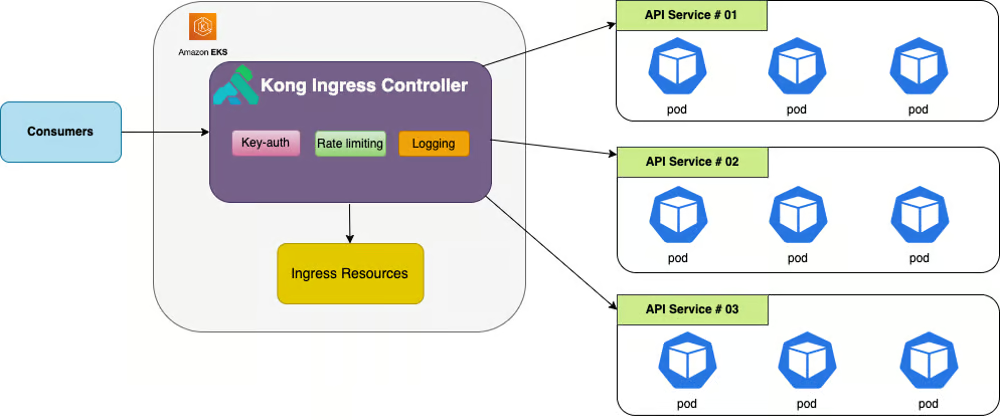

In cloud-native applications, managing APIs efficiently is crucial. An API Gateway sits between clients and backend services and routes requests and responses. Along the way it handles cross-cutting tasks such as CORS validation, TLS termination, and authentication. In this post, we deploy Kong Gateway in DB-less mode on an AWS EKS cluster. Helm handles the installation, and GitLab CI handles validation and deployment.

Understanding the Role of an API Gateway

At its core, an API Gateway is a reverse proxy that mediates communication between clients and backend services. It matches each incoming request to a backend. While the request is in transit, it can also handle CORS validation, TLS termination, JWT authentication, header injection, session management, response transformation, rate limiting, ACLs, and more. Centralizing these concerns in one layer keeps them out of the individual services in a microservices architecture.
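To make the proxy role concrete, here is a simplified Bash model of how a gateway picks a backend for a request. It reuses the host, methods, and path prefixes defined in prd/declarative.yaml later in this post. The function name match_route and its matching rules are assumptions for illustration, not Kong's actual router. Those rules are: the host must match exactly, the method must be listed, and the longest plain-string prefix wins.

```shell
#!/usr/bin/env bash
# Simplified model of route matching, NOT Kong's actual router.
# Route table copied from prd/declarative.yaml. Assumptions: the host must
# match exactly, the method must be listed, paths match as plain string
# prefixes, and the longest matching prefix wins.

ROUTE_HOST="k-prd.devopsmonirul.tech"
ROUTE_METHODS=" GET POST PUT "

# Each entry: "<path prefix> <service name>"
ROUTES=(
  "/metrics invoicing-matrics"
  "/catalogs/businessCatalog businessCatalog"
  "/usermanagement usermanagment"
  "/ocr ocr"
)

# match_route HOST METHOD PATH -> prints the service name, returns 1 if no route matches
match_route() {
  local host="$1" method="$2" path="$3"
  local entry prefix service best_prefix="" best_service=""

  [[ "$host" == "$ROUTE_HOST" ]] || return 1
  [[ "$ROUTE_METHODS" == *" $method "* ]] || return 1

  for entry in "${ROUTES[@]}"; do
    prefix="${entry%% *}"
    service="${entry#* }"
    # Keep the longest prefix that the request path starts with
    if [[ "$path" == "$prefix"* ]] && (( ${#prefix} > ${#best_prefix} )); then
      best_prefix="$prefix"
      best_service="$service"
    fi
  done

  [[ -n "$best_service" ]] || return 1
  printf '%s\n' "$best_service"
}
```

For example, match_route k-prd.devopsmonirul.tech GET /usermanagement/42 prints usermanagment. A request that matches no route returns 1, which is roughly the point where Kong would answer with a 404.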

Introducing Kong Gateway

Developed by Kong Inc. (KongHQ), Kong Gateway is a lightweight, decentralized API Gateway. It runs as a Lua application inside NGINX and is distributed together with OpenResty. Most of its functionality comes from a rich ecosystem of plugins. Whether your API management needs are basic or complex, Kong Gateway can scale with them.

The Evolution: DB-less Kong Gateway

Traditionally, Kong Gateway stored its configuration (routes, services, and plugins) in a database such as PostgreSQL. Since Kong 1.1, it can also run in "DB-less" mode, also known as the declarative method. In this mode the entire configuration lives in code, typically a declarative.yaml file, and Kong loads it into memory at startup. This brings several advantages:

1. Version Control:

Configuration becomes easily versionable, enabling seamless collaboration and tracking changes over time.

2. Simplicity and Agility:

Eliminating the need for a separate database streamlines deployment and enhances agility in managing configurations.

3. Infrastructure as Code (IaC):

The move towards a code-centric approach aligns with the principles of Infrastructure as Code, promoting consistency and reproducibility.

4. Maintenance Ease:

Without a database, there are no schema migrations, backups, or database upgrades to manage alongside the gateway.
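Keeping the configuration in Git also means it can be checked automatically before merge. Here is a minimal sketch that assumes the conventional layout: services are listed as "  - name: <service>" (two-space indent) and plugins scope themselves with "    service: <service>" (four-space indent). The hypothetical check_plugin_services function confirms that every service-scoped plugin points at a declared service. It is a text check, not a YAML parser. Kong's own "kong config parse <file>" command remains the authoritative check.

```shell
#!/usr/bin/env bash
# Hypothetical pre-merge lint for a DB-less declarative file.
# Assumes top-level keys at column 0, services as "  - name: <svc>" and
# plugin scoping lines as "    service: <svc>". Not a YAML parser.

# check_plugin_services FILE -> prints unknown references, returns 1 if any
check_plugin_services() {
  local file="$1"
  awk '
    # Track which top-level section we are in (services:, plugins:, ...)
    /^[A-Za-z_]+:/ { section = $1 }
    section == "services:" && /^  - name: /   { declared[$3] = 1 }
    section == "plugins:"  && /^    service: / { used[$2] = 1 }
    END {
      bad = 0
      for (svc in used) {
        if (!(svc in declared)) {
          print "plugin references unknown service: " svc
          bad = 1
        }
      }
      exit bad
    }
  ' "$file"
}
```

In the validation job, this would run as check_plugin_services prd/declarative.yaml. Suppose a plugin said usermanagement instead of the declared usermanagment. The lint would report that mismatch before merge, instead of it surfacing only when Kong loads the file.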

Deploying Kong Gateway on AWS EKS

Now that we have covered what Kong Gateway is and why the DB-less approach helps, let's deploy it on an AWS EKS cluster.

Declarative Configuration (prd/declarative.yaml): Defines the Kong services, their routes, and the associated plugins for DB-less mode.

```yaml
_format_version: "2.1"
_transform: true

services:
  - name: invoicing-matrics
    url: https://prdawsinvoicing.example.com/actuator/
    routes:
      - paths:
          - /metrics
        methods:
          - GET
          - POST
          - PUT
        strip_path: true
        protocols:
          - https
          - http
        hosts:
          - k-prd.devopsmonirul.tech

  - name: businessCatalog
    url: https://prdawscatalogos.example.com/catalogs/v1.0/catalogs/catalogs/businessCatalog
    routes:
      - paths:
          - /catalogs/businessCatalog
        methods:
          - GET
          - POST
          - PUT
        strip_path: true
        protocols:
          - https
          - http
        hosts:
          - k-prd.devopsmonirul.tech

  - name: usermanagment
    url: https://prdawsusermanagment.example.com/usermanagement/v1.0/users
    routes:
      - paths:
          - /usermanagement
        methods:
          - GET
          - POST
          - PUT
        strip_path: true
        protocols:
          - https
          - http
        hosts:
          - k-prd.devopsmonirul.tech

  - name: ocr
    url: https://prdawsocr.example.com/ocr/v1.0/actuator
    routes:
      - paths:
          - /ocr
        methods:
          - GET
          - POST
          - PUT
        strip_path: true
        protocols:
          - https
          - http
        hosts:
          - k-prd.devopsmonirul.tech

#-------------------- Plugins: Prometheus (global) and CORS (per service) --------------------
plugins:
  # No service or route set, so this plugin applies to all traffic
  - name: prometheus

  - name: cors
    service: invoicing-matrics
    config:
      # "*" is the wildcard origin: keep it only if any origin should be allowed,
      # otherwise list explicit origins only
      origins:
        - https://controller-ai.com
        - https://www.example.com
        - https://monirul.digital
        - https://example.com
        - http://local.devopsmonirul.com:4200
        - "*"
      methods:
        - GET
        - POST
        - PUT
      credentials: false
      max_age: 3600
      preflight_continue: false

  - name: cors
    service: businessCatalog
    config:
      origins:
        - "*"
      methods:
        - GET
        - POST
      credentials: false
      max_age: 3600
      preflight_continue: false

  - name: cors
    service: usermanagment
    config:
      origins:
        - "*"
      methods:
        - GET
        - POST
        - PUT
      credentials: false
      max_age: 3600
      preflight_continue: false

  - name: cors
    service: ocr
    config:
      origins:
        - "*"
      methods:
        - GET
        - POST
        - PUT
      credentials: false
      max_age: 3600
      preflight_continue: false
```
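With strip_path: true, Kong removes the matched route prefix. It then appends the rest of the request path to the service URL. Below is a simplified Bash model of that rewrite. It was checked only against the simple cases in this file and approximates Kong's default path handling; it is not a specification. The function name upstream_url is hypothetical.

```shell
#!/usr/bin/env bash
# Simplified model of the strip_path: true rewrite (approximation only).

# upstream_url SERVICE_URL ROUTE_PREFIX REQUEST_PATH -> prints the upstream URL
upstream_url() {
  local service_url="$1" prefix="$2" request_path="$3"
  local rest

  # Only requests that start with the route prefix reach this service
  [[ "$request_path" == "$prefix"* ]] || return 1

  # strip_path: true removes the matched prefix from the request path
  rest="${request_path#"$prefix"}"

  # Nothing left: the request goes to the service URL itself
  if [[ -z "$rest" ]]; then
    printf '%s\n' "$service_url"
    return 0
  fi

  # Join with exactly one slash between the service path and the remainder
  printf '%s/%s\n' "${service_url%/}" "${rest#/}"
}
```

Under this model, a request to https://k-prd.devopsmonirul.tech/metrics/prometheus reaches the invoicing service at /actuator/prometheus. This proxied /metrics route is unrelated to Kong's own Prometheus endpoint. The prometheus plugin exposes that endpoint on the status listener (port 8100 in prd/kong.yaml).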

Helm Configuration (prd/kong.yaml):

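These are the Helm chart values for the Kong deployment: resource requests and limits, ingress controller settings, Kong environment variables, and autoscaling parameters. The Kong Ingress Controller is disabled because all routing comes from the declarative file.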

```yaml
deployment:
  serviceAccount:
    create: false

podAnnotations:
  "cluster-autoscaler.kubernetes.io/safe-to-evict": "true"

resources:
  limits:
    memory: 1Gi
  requests:
    cpu: 500m
    memory: 1Gi

ingressController:
  enabled: false
  installCRDs: false

env:
  database: "off"              # DB-less mode
  nginx_worker_processes: "2"
  proxy_access_log: /dev/stdout json_analytics
  proxy_error_log: /dev/stdout
  log_level: "error"
  trusted_ips: "0.0.0.0/0,::/0"
  headers: "off"
  anonymous_reports: "off"
  admin_listen: "off"
  status_listen: 0.0.0.0:8100  # Status API; also serves /metrics with the prometheus plugin
  nginx_http_log_format: |
    json_analytics escape=json '{"msec": "$msec", "status": "$status", "request_uri": "$request_uri", "geoip_country_code": "$http_x_client_region", "client_subdivision": "$http_x_client_subdivision", "client_city": "$http_x_client_city","client_city_latlong": "$http_x_client_citylatlong", "connection": "$connection", "connection_requests": "$connection_requests", "pid": "$pid", "request_id": "$request_id", "request_length": "$request_length", "remote_addr": "$remote_addr", "remote_user": "$remote_user", "remote_port": "$remote_port", "time_local": "$time_local", "time_iso8601": "$time_iso8601", "request": "$request", "args": "$args", "body_bytes_sent": "$body_bytes_sent", "bytes_sent": "$bytes_sent", "http_referer": "$http_referer", "http_user_agent": "$http_user_agent", "http_x_forwarded_for": "$http_x_forwarded_for", "http_host": "$http_host", "server_name": "$server_name", "request_time": "$request_time", "upstream": "$upstream_addr", "upstream_connect_time": "$upstream_connect_time", "upstream_header_time": "$upstream_header_time", "upstream_response_time": "$upstream_response_time", "upstream_response_length": "$upstream_response_length", "upstream_cache_status": "$upstream_cache_status", "ssl_protocol": "$ssl_protocol", "ssl_cipher": "$ssl_cipher", "scheme": "$scheme", "request_method": "$request_method", "server_protocol": "$server_protocol", "pipe": "$pipe", "gzip_ratio": "$gzip_ratio", "http_cf_ray": "$http_cf_ray", "trace_id": "$http_x_b3_traceid", "proxy_host": "$proxy_host"}'

admin:
  enabled: true
  http:
    enabled: false
  tls:
    enabled: true

proxy:
  type: NodePort
  tls:
    enabled: false             # TLS is terminated at the ALB

autoscaling:
  enabled: true
  minReplicas: 1
  maxReplicas: 11
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 60
    - type: Resource
      resource:
        name: memory
        target:
          type: Utilization
          averageUtilization: 80

affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
        - matchExpressions:
            - key: kubernetes.io/os
              operator: In
              values:
                - linux
            - key: kubernetes.io/arch
              operator: In
              values:
                - amd64
                - arm64
            - key: eks.amazonaws.com/compute-type
              operator: NotIn
              values:
                - fargate
```
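The autoscaling block follows the standard Horizontal Pod Autoscaler rule: desired = ceil(currentReplicas × currentUtilization / targetUtilization). The HPA computes this for each metric and uses the largest result. The sketch below applies the rule to the CPU (60%) and memory (80%) targets above. It is simplified: integer percentages, the default 10% tolerance, and no scale-down stabilization or handling of unready pods. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch of the HPA replica calculation for the two Resource metrics in
# prd/kong.yaml (CPU target 60%, memory target 80%). Simplified: integer
# percentages, default 10% tolerance, no stabilization windows, no handling
# of unready pods or missing metrics.

# hpa_proposal REPLICAS CURRENT_UTIL TARGET_UTIL -> proposed replica count
hpa_proposal() {
  local replicas="$1" current="$2" target="$3"
  local diff=$(( current - target ))
  if (( diff < 0 )); then
    diff=$(( -diff ))
  fi

  # |current/target - 1| <= 0.1 is within tolerance: keep the current count
  if (( diff * 10 <= target )); then
    echo "$replicas"
  else
    # desired = ceil(replicas * current / target), in integer arithmetic
    echo $(( (replicas * current + target - 1) / target ))
  fi
}

# hpa_desired REPLICAS MIN MAX CPU_UTIL MEM_UTIL -> final replica count
hpa_desired() {
  local replicas="$1" min="$2" max="$3" cpu="$4" mem="$5"
  local by_cpu by_mem desired
  by_cpu=$(hpa_proposal "$replicas" "$cpu" 60)
  by_mem=$(hpa_proposal "$replicas" "$mem" 80)

  # With several metrics, the HPA uses the largest proposal
  desired=$(( by_cpu > by_mem ? by_cpu : by_mem ))

  # Respect minReplicas / maxReplicas
  if (( desired < min )); then desired=$min; fi
  if (( desired > max )); then desired=$max; fi
  echo "$desired"
}
```

For example, suppose two pods average 90% CPU against the 60% target. CPU then proposes ceil(2 × 90 / 60) = 3 replicas. If memory at 40% proposes only 1, the HPA takes the larger value, so hpa_desired 2 1 11 90 40 prints 3.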

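Vertical Pod Autoscaler Configuration (prd/vpa.yaml): Configures the Vertical Pod Autoscaler (VPA), which can adjust pod resource requests and limits based on observed usage. With updateMode set to "Off", it only computes recommendations and never modifies the running pods. That keeps it from conflicting with the CPU- and memory-based HPA above. The VPA components (CRDs and recommender) must be installed in the cluster.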

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: prd-kong-vpa
  namespace: prd-kong
spec:
  targetRef:
    apiVersion: "apps/v1"
    kind: Deployment
    name: prd-kong
  updatePolicy:
    # "Off": only compute recommendations; pods are never evicted or resized
    updateMode: "Off"
```
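Because updateMode is "Off", the recommendations have to be read and applied by hand. Here is a minimal sketch, assuming the VPA CRDs and recommender are installed and that "vpa" is the resource short name registered by the upstream CRD. The vpa_targets helper is hypothetical. It prints each container's recommended CPU and memory targets, which you can compare with the 1Gi memory request in prd/kong.yaml.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the VPA target recommendation per container.
# Assumes the VPA CRDs and recommender are installed in the cluster.

# vpa_targets [NAMESPACE] [VPA_NAME]
vpa_targets() {
  local namespace="${1:-prd-kong}" name="${2:-prd-kong-vpa}"
  # One line per container: name, CPU target, memory target (tab-separated)
  kubectl get vpa "$name" --namespace "$namespace" \
    -o jsonpath='{range .status.recommendation.containerRecommendations[*]}{.containerName}{"\t"}{.target.cpu}{"\t"}{.target.memory}{"\n"}{end}'
}
```

For the full picture, kubectl describe vpa prd-kong-vpa -n prd-kong shows the lower and upper bounds as well.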

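Ingress Configuration (prd/ingress.yaml): An Ingress handled by the AWS Load Balancer Controller. It provisions an internet-facing ALB that terminates TLS with an ACM certificate. The ALB forwards traffic to the prd-kong-proxy service on port 80.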

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: prd-kong-ingress
  namespace: prd-kong
  annotations:
    # Note: a custom action only takes effect when a rule path uses it as a backend
    # (service name "ssl-redirect", port name "use-annotation"). No rule below does,
    # so plain HTTP on port 80 is not redirected.
    alb.ingress.kubernetes.io/actions.ssl-redirect: '{"Type": "redirect", "RedirectConfig": { "Protocol": "HTTPS", "Port": "443", "StatusCode": "HTTP_301"}}'
    alb.ingress.kubernetes.io/certificate-arn: arn:aws:acm:eu-west-1:234366607644:certificate/c25d25f3-78ae-4197-a806-1882f6b947dc
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTP": 80}, {"HTTPS":443}]'
    alb.ingress.kubernetes.io/scheme: internet-facing
    alb.ingress.kubernetes.io/success-codes: 200,404,301,302
    alb.ingress.kubernetes.io/target-type: ip
spec:
  ingressClassName: alb
  rules:
    - host: k-prd.devopsmonirul.tech
      http:
        paths:
          - path: /*
            pathType: ImplementationSpecific
            backend:
              service:
                name: prd-kong-proxy
                port:
                  number: 80
```
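The success-codes annotation matters because of how the ALB health-checks Kong. Assume the target group keeps the AWS Load Balancer Controller's default health check: path "/" and success code 200. Kong has no route matching that request, so it answers 404. Without 404 in the list, every Kong pod would be marked unhealthy. The hypothetical helper below sketches the matching rule for a success-code list, which may hold single codes or ranges such as 200-299.

```shell
#!/usr/bin/env bash
# Sketch: does an HTTP status code satisfy an ALB-style success-code list?
# LIST holds comma-separated codes and/or ranges, e.g. "200,404,301,302" or "200-299".

# code_in_success_list CODE LIST -> returns 0 if CODE is accepted
code_in_success_list() {
  local code="$1" list="$2" item low high
  local IFS=','              # split LIST on commas inside this function only
  for item in $list; do
    if [[ "$item" == *-* ]]; then
      low="${item%-*}"
      high="${item#*-}"
      if (( code >= low && code <= high )); then
        return 0
      fi
    elif [[ "$item" == "$code" ]]; then
      return 0
    fi
  done
  return 1
}
```

With the annotation above, code_in_success_list 404 "200,404,301,302" succeeds, while a 502 from a failing pod is still treated as unhealthy.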

Common Scripts (scripts/common.sh): Shared shell functions for printing colored text, checking whether a command exists, and exiting with an error message. It also installs kubectl and Helm and configures AWS access.

```shell
#!/usr/bin/env bash

set -euo pipefail

export HELM_VERSION="v3.7.2"

# Colored output helpers (ANSI escape codes)
function red() {
  local text="${1:- }"
  echo -e "\033[31m${text}\033[0m"
}

function green() {
  local text="${1:- }"
  echo -e "\033[32m${text}\033[0m"
}

function yellow() {
  local text="${1:- }"
  echo -e "\033[33m${text}\033[0m"
}

function blue() {
  local text="${1:- }"
  echo -e "\033[36m${text}\033[0m"
}

# Succeeds if the given command name or executable path is available
function exists() {
  command -v "${1:-}" > /dev/null 2>&1
}

# Print a message in red and stop the script
function graceful_exit() {
  red "${1:- }"
  exit 1
}

# Run a command on a GCP bastion host over IAP (needs the GCP_* variables)
function bastion_command() {
  # Default to an empty string so the emptiness check below can trigger
  local command="${1:-}"
  if [[ -z "${command}" ]]; then
    graceful_exit "Command can't be empty."
  else
    gcloud compute ssh --project "${GCP_PROJECT_ID}" --zone "${GCP_ZONE_ID}" "${GCP_USER}@${GCP_SERVER_NAME}" --tunnel-through-iap --command="${command}"
  fi
}

# Install kubectl 1.23.13 (Amazon EKS build, linux/amd64) unless it is already present
function install_kubectl() {
  if exists /usr/local/bin/kubectl; then
    echo "Kubectl is installed already"
  else
    curl -fsSL -o kubectl https://s3.us-west-2.amazonaws.com/amazon-eks/1.23.13/2022-10-31/bin/linux/amd64/kubectl
    chmod +x ./kubectl
    mkdir -p "$HOME/bin" && cp ./kubectl "$HOME/bin/kubectl" && export PATH="$PATH:$HOME/bin"
    cp ./kubectl /usr/local/bin/kubectl   # requires write access to /usr/local/bin
    kubectl version --short --client
  fi
}

# Install the pinned Helm version with the official installer script
function install_helm() {
  if exists /usr/local/bin/helm; then
    echo "Helm is installed already"
  else
    curl -fsSL https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-3 | bash -s -- --version "${HELM_VERSION}"
  fi
}

# Write kubeconfig credentials for the EKS cluster.
# --name expects the cluster name, which this setup stores in AWS_PROJECT_ID;
# CLUSTER_NAME is used as the AWS CLI profile.
function setup_aws_auth() {
  aws eks update-kubeconfig --name "${AWS_PROJECT_ID}" --region "${AWS_LOCATION}" --profile "${CLUSTER_NAME}"
}
```
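The scripts rely on variables such as KONG_NAME, AWS_PROJECT_ID, AWS_LOCATION and CLUSTER_NAME coming from the CI job. With set -u, a missing one aborts the run with a terse "unbound variable" error. A small hypothetical addition to common.sh (not part of the original) reports all missing variables at once:

```shell
#!/usr/bin/env bash
# Hypothetical helper, not part of the original common.sh: report every
# missing variable at once instead of failing on the first "unbound variable".

# require_env VAR... -> returns 1 and lists the names that are unset or empty
require_env() {
  local name
  local -a missing=()
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable named $name
    if [[ -z "${!name:-}" ]]; then
      missing+=("$name")
    fi
  done
  if (( ${#missing[@]} > 0 )); then
    echo "Missing required environment variables: ${missing[*]}" >&2
    return 1
  fi
  return 0
}

# Example, in setup-kong.sh right after sourcing common.sh:
# require_env KONG_NAME AWS_PROJECT_ID AWS_LOCATION CLUSTER_NAME || graceful_exit "Aborting."
```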

Setup Kong Script (scripts/setup-kong.sh): Installs kubectl and Helm, adds the Kong Helm repo, and sets up AWS authentication. It then creates the namespace, applies the VPA configuration (plus the Ingress for prd), and deploys Kong with Helm.

```shell
#!/usr/bin/env bash

set -euo pipefail

# Run from the repository root so the relative paths below resolve
cd "$(dirname "$0")/.."

source scripts/common.sh

green "Installing Kubectl"
install_kubectl

green "Installing helm version => ${HELM_VERSION}"
install_helm

green "Setting up Kong Helm Repo"
helm repo add kong https://charts.konghq.com
helm repo update

green "Setting up AWS Auth"
setup_aws_auth

# Idempotent namespace creation: render the manifest, then apply it
green "Creating Namespace => ${KONG_NAME}-kong"
kubectl create namespace "${KONG_NAME}-kong" --dry-run=client -o yaml | kubectl apply -f -

green "Set the current namespace"
kubectl config set-context --current --namespace="${KONG_NAME}-kong"

# "|| true": a failure here (for example, VPA CRDs not installed) does not stop the deploy
green "Setting up VPA Config => ${KONG_NAME}-kong-vpa"
kubectl apply -f "${KONG_NAME}/vpa.yaml" || true

# Only prd is exposed through the ALB Ingress
if [[ "${KONG_NAME}" == prd ]]; then
  green "Setting up Ingress => ${KONG_NAME}-kong-ingress"
  kubectl apply -f "${KONG_NAME}/ingress.yaml"
fi

# Install or upgrade the release; the declarative file is passed as dblessConfig.config
green "Setting up Kong => ${KONG_NAME}"
helm upgrade \
  --install \
  "${KONG_NAME}" \
  kong/kong \
  --namespace "${KONG_NAME}-kong" \
  --create-namespace \
  -f "${KONG_NAME}/kong.yaml" \
  --set-file dblessConfig.config="${KONG_NAME}/declarative.yaml" \
  --version 2.6.3 \
  --wait \
  --debug
```
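helm upgrade --wait waits until the pods are ready, but it does not prove that traffic flows end to end through the ALB. Below is a hypothetical smoke test that is not part of the original scripts. It sends one GET per route prefix through the public hostname and fails only on connection errors or 5xx responses. Other codes are printed but tolerated, since a bare GET may not be a valid call for every upstream.

```shell
#!/usr/bin/env bash
# Hypothetical post-deploy smoke test (not part of the original scripts).
# Fails on connection errors (000) or 5xx; prints every status it sees.

# smoke_test HOST PATH...
smoke_test() {
  local host="$1" path status failed=0
  shift
  for path in "$@"; do
    # -o /dev/null discards the body; -w prints only the HTTP status code
    status=$(curl -sS -o /dev/null -w '%{http_code}' "https://${host}${path}") || status="000"
    echo "GET https://${host}${path} -> ${status}"
    if [[ "$status" == 000 || "$status" == 5* ]]; then
      failed=1
    fi
  done
  return "$failed"
}
```

For example, smoke_test k-prd.devopsmonirul.tech /ocr /usermanagement /catalogs/businessCatalog could run as a final step after the Helm release.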

Validate Kong Script (scripts/validate-kong.sh): Previews changes without applying them. It runs a Helm diff for the Kong release and a kubectl diff for the VPA and, in the prd environment, the Ingress manifests.

```shell
#!/usr/bin/env bash

set -euo pipefail

# Run from the repository root so the relative paths below resolve
cd "$(dirname "$0")/.."

source scripts/common.sh

green "Installing Kubectl"
install_kubectl

green "Installing helm version => ${HELM_VERSION}"
install_helm

green "Setting up Kong Helm Repo"
helm repo add kong https://charts.konghq.com
helm repo update

# "|| true": installing fails if the plugin is already present
green "Installing Helm Diff Plugin"
helm plugin install https://github.com/databus23/helm-diff || true

green "Setting up AWS Auth"
setup_aws_auth

# Same namespace convention as setup-kong.sh; NAMESPACE (set in CI) takes precedence
NAMESPACE="${NAMESPACE:-${KONG_NAME}-kong}"

green "Set the current namespace"
kubectl config set-context --current --namespace="${NAMESPACE}"

# kubectl diff exits 1 when it finds differences and >1 on errors; "|| true" tolerates both
green "Validating VPA Config => ${KONG_NAME}-kong-vpa"
kubectl diff -f "${KONG_NAME}/vpa.yaml" || true

if [[ "${KONG_NAME}" == prd ]]; then
  green "Validating Ingress => ${KONG_NAME}-kong-ingress"
  kubectl diff -f "${KONG_NAME}/ingress.yaml" || true
fi

green "Validating Kong => ${KONG_NAME}"
helm diff upgrade \
  --install \
  "${KONG_NAME}" \
  kong/kong \
  --namespace "${NAMESPACE}" \
  -f "${KONG_NAME}/kong.yaml" \
  --set-file dblessConfig.config="${KONG_NAME}/declarative.yaml" \
  --version 2.6.3
```
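kubectl diff uses its exit code to signal the result: 0 means no differences, 1 means differences were found, and anything greater than 1 means kubectl or diff failed. A plain "|| true" hides all three cases. Here is a sketch of a hypothetical wrapper that reports which case occurred and still fails on real errors:

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around kubectl diff:
#   0 = no differences, 1 = differences found, >1 = kubectl or diff failed.

# report_diff FILE
report_diff() {
  local file="$1" rc=0
  kubectl diff -f "$file" || rc=$?
  case "$rc" in
    0) echo "${file}: no changes" ;;
    1) echo "${file}: changes detected (see diff above)" ;;
    *) echo "${file}: kubectl diff failed with exit code ${rc}" >&2
       return "$rc" ;;
  esac
}
```

In validate-kong.sh, report_diff "${KONG_NAME}/vpa.yaml" could replace kubectl diff -f ... || true. Under set -e, a genuine error would then stop the validation job.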

GitLab CI Configuration (.gitlab-ci.yml): CI/CD pipeline configuration for validating and deploying Kong in the prd environment.

The validation job runs on master and on merge requests whenever files under prd/ change:

```yaml
validate-kong-prd:
  stage: validate-kong-prd
  environment: kong-prd
  variables:
    ENV: prd
    KONG_NAME: prd
    AWS_PROJECT_ID: monirul-digital
    CLUSTER_NAME: monirul
    AWS_LOCATION: eu-west-1
    NAMESPACE: ${KONG_NAME}-kong
  extends: [ .common-dependencies ]
  script:
    - aws eks update-kubeconfig --name $AWS_PROJECT_ID --region $AWS_LOCATION --profile $CLUSTER_NAME
    - ./scripts/validate-kong.sh
  only:
    refs:
      - master
      - merge_requests
    changes:
      - prd/**/*
  allow_failure: false
```

The deployment job runs only on master and must be started manually:

```yaml
deploy-helm-kong-prd:
  stage: deploy-kong-prd
  environment: kong-prd
  variables:
    ENV: prd
    KONG_NAME: prd
    AWS_PROJECT_ID: monirul-digital
    CLUSTER_NAME: monirul
    AWS_LOCATION: eu-west-1
    NAMESPACE: ${KONG_NAME}-kong
  extends: [ .common-dependencies ]
  script:
    - aws eks update-kubeconfig --name $AWS_PROJECT_ID --region $AWS_LOCATION --profile $CLUSTER_NAME
    - ./scripts/setup-kong.sh
  only:
    refs:
      - master
    changes:
      - prd/**/*
  allow_failure: false
  when: manual
```
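Both jobs list only prd/**/* under changes. As a result, a commit that touches only scripts/ or .gitlab-ci.yml does not run them; adding scripts/**/* to the list would change that. The sketch below is a rough local approximation of the rule. It checks whether a set of changed paths would match. GitLab itself compares against the merge request's target branch or the previous push, so treat this as an estimate. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical local approximation of the "changes: prd/**/*" rule:
# succeed if any changed path lies under prd/.

# changes_touch_prd FILE...
changes_touch_prd() {
  local f
  for f in "$@"; do
    if [[ "$f" == prd/* ]]; then
      return 0
    fi
  done
  return 1
}

# Example: check the current branch against master
# mapfile -t files < <(git diff --name-only origin/master...HEAD)
# changes_touch_prd "${files[@]}" && echo "prd jobs would run"
```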

With this setup, the gateway configuration, Helm values, and Kubernetes manifests all live in Git. GitLab CI previews every change in the validation job before a manual deployment to prd, which keeps deployments automated and consistent.

Next Steps:

- Adapt the scripts and configuration (hostnames, certificate ARN, cluster and profile names) to your own AWS EKS environment.

- Monitor Kong Gateway through the Prometheus plugin metrics on the status port (8100) and the JSON access logs written to stdout.

- Use the VPA recommendations to tune resource requests, and revisit the CORS origins and autoscaling limits as your requirements evolve.

If you have questions or suggestions, feel free to leave a comment!